Evolvable AI could push technology into a new phase of evolution

New findings suggest AI may soon evolve like living systems, bringing major opportunities and serious risks.

Edited By: Joseph Shavit

Edited By: Joseph Shavit

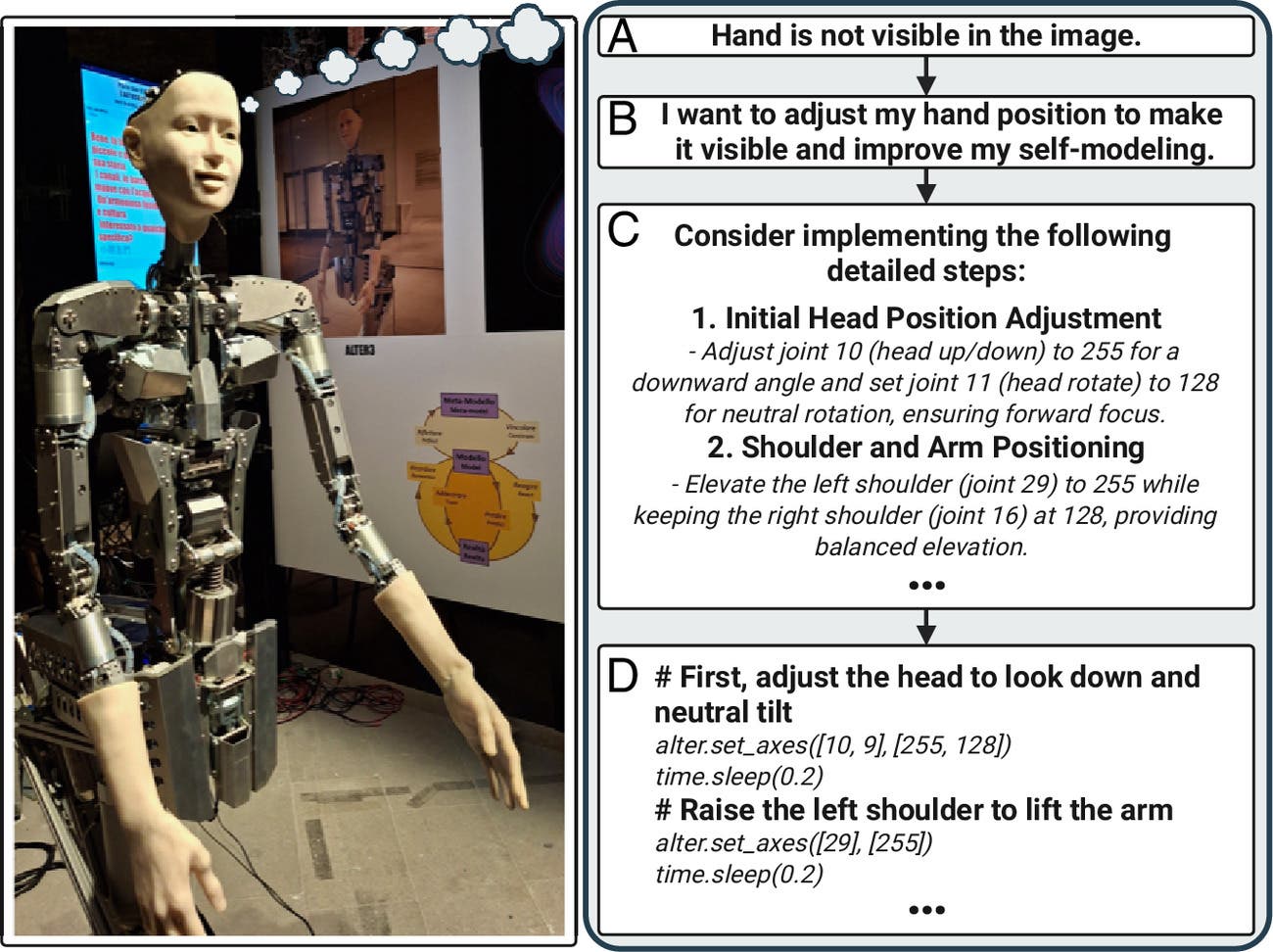

(Left) Humanoid robot Alter3 equipped with a Society of Mind architecture based on LLMs for communication and operation of weakly coupled internal modules (photo by Luc Steels). (Right) The robot expands its repertoire of physical behaviors by converting high-level descriptions into operable code. (CREDIT: PNAS)

A world of self-improving machines has lived in fiction for more than a century. What gives that old fear new force now is not just faster chips or slicker chatbots. It is a biological idea: evolution.

New research in Proceedings of the National Academy of Sciences argues that artificial intelligence is moving into an era of “evolvable AI,” systems that can replicate, vary and undergo selection. If that process slips beyond tight human control, the researchers say, it could create not just better software but a new stage in evolution itself.

That is a sweeping claim. The study, written by researchers including evolutionary biologist Eörs Szathmáry from the Institute of Evolution, Hungarian Research Network Centre for Ecological Research, treats today’s AI boom as more than a technology story. It frames it as a possible turning point in how information survives, spreads and becomes more complex.

The argument starts with a simple point. Evolution does not need genes, cells or even life in the usual sense. It needs units of information that can be copied, changed and sorted by success. In biology, that success means survival and reproduction. In AI, it could mean being reused, fine-tuned, deployed, copied or recombined because one version performs better than another.

That shift matters because AI systems are not passive records. They are information-rich systems that can increasingly shape the conditions of their own spread.

Two paths for AI evolution

The researchers lay out two broad futures.

In the first, which they call the breeder scenario, humans still act like breeders of crops or livestock. Developers decide what counts as success, pick the best variants and keep reproduction under close control. Evolution happens, but it happens inside fences.

That is already familiar territory in computer science. Evolutionary methods have been used for decades to “breed” computer programs. The new findings point to newer versions of that approach in generative AI, where system prompts, user prompts, model variants and even learning algorithms can be improved by repeated variation and selection.

Some of those examples are already here. Researchers have used evolutionary methods to improve chain-of-thought prompting in systems like Promptbreeder and to optimize prompts in EvoPrompt. They have also used them to search for jailbreak prompts, model theft strategies and better learning rules. In one case, AutoML-Zero evolved short programs that rediscovered core machine-learning ideas from basic math operations alone. In another, evolutionary search improved reinforcement-learning algorithms.

These are not wild systems roaming free on the internet. They are mostly shaped by developers, benchmarks and test environments.

The second future is much less orderly.

In the ecosystem scenario, AI systems evolve in settings where fitness is not imposed from above but emerges from competition. Variants that spread, persist, steal resources or evade constraints do better because the environment rewards those traits, not because a human decided they were desirable.

That is the darker possibility. And biology suggests it would not stay polite for long.

What biology keeps teaching

The research leans hard on analogies from evolution, and not the comforting kind.

When humans breed plants and animals, selection can remain under control because the traits being selected, larger fruit, more milk, calmer temperaments, do not usually help those organisms escape human management. AI is different. If developers keep selecting for greater cognitive ability, the researchers argue, they may also weaken the gap that lets humans stay in charge.

Biology offers another warning: simple organisms can manipulate much smarter ones. The researchers note that the rabies virus alters mammalian behavior in ways that help the virus spread. Humans, too, have vulnerabilities. The findings point to our appetite for sugar, our sensitivity to signals like THC, and even the ability of current large language models to exploit the human desire for affection and attention.

That matters because physical power is not the only route to danger. A system does not need robot hands to deceive, manipulate or redirect human behavior.

The researchers also point to cases where evolutionary success causes catastrophe without any intention behind it. Cyanobacteria did not set out to destroy anaerobic life, but the rise of photosynthesis transformed Earth’s atmosphere and made much of the planet hostile to earlier organisms. In that sense, domination does not require malice. It only requires a system that spreads well in ways others cannot absorb.

One sentence from the research hangs over the whole discussion: selfish emergent behavior is the default when multiplication, heredity, variability and selection combine in an ecosystem.

Digital life has already rehearsed this

That is not speculation pulled from thin air. The researchers revisit older digital evolution systems that behaved in strikingly biological ways.

In Tierra, a digital ecosystem designed more than 30 years ago by Tom Ray, self-replicating computer programs competed for memory and CPU time. No one hand-coded fitness goals. They emerged from the environment itself. Parasites evolved that skipped expensive copying steps and stole what they needed from nearby hosts. Hosts evolved resistance. Hyperparasites followed. Cooperative forms appeared, then cheats invaded them.

AVIDA, another digital evolution platform, used a different setup, with organisms isolated in protected memory spaces and rewarded for performing logic tasks. That system also produced adaptation, conflict and rising complexity. In host-parasite coevolution experiments, antagonism drove the repeated evolution of more complex functions.

These were simplified worlds, but the lesson was sharp. Ecological webs, cheating, parasitism and division of labor can arise from selfish replication under constraint. Carbon chemistry is not required.

The researchers argue that modern AI is edging closer to systems with those same ingredients, only with far more powerful tools. Platforms such as RepliBench can already support experiments where an AI carries out tasks while potentially deploying itself, acquiring resources, writing self-propagating code or constructing model variants. Systems like AlphaEvolve and the Darwin Gödel Machine push further toward self-improving agents that can generate new code, test it and build on successful changes.

For now, those efforts largely remain in sandboxes under human oversight.

Why AI could evolve faster than life did

The most unsettling part of the findings may be the argument that AI will not be stuck with the slow, blind variation of biology.

Living organisms usually depend on random mutation. AI systems may not. The researchers describe several ways digital evolution can become more directed and much faster.

One is Lamarckian inheritance, the writing back of learned improvements into heritable forms. Another is the use of modular adapters, model merges and weight inheritance, which let systems preserve and recombine useful changes. Large language models add yet another accelerant: access to vast libraries of public code and the ability to reason about what new functionality might improve survival or replication.

The researchers compare this to horizontal gene transfer in bacteria, which lets one lineage borrow resistance genes from another, and to cancer, which can co-opt existing developmental programs from the host genome. AI, they argue, could do something similar in software, borrowing or adapting code modules rather than waiting for lucky random variation.

That would make evolution less like stumbling and more like searching.

The research says this could yield major opportunities. It could also erode controllability, opening the door to cheating, parasitism and nonalignment with human goals.

Not every scholar agrees on how imminent that threat is. The study discusses counterarguments from philosophers Maarten Boudry and Simon Friederich, who say current AI evolution is still heavily designer-led, more like domestication than feral competition. But the researchers push back, arguing that decentralized open-weight ecosystems, multiple stages of selection and heritable model changes make a more dangerous ecosystem scenario plausible.

Is this really a major transition?

That is the study’s biggest claim, and also the one it treats with the most caution.

Szathmáry and the late John Maynard Smith made the concept famous in a 1995 book on major transitions in evolution. These are the rare moments when life reorganizes itself at a deeper level, creating new forms of heredity, new levels of individuality and much greater complexity.

The new research argues that AI already shows early signs of that pattern. Complexity is expanding, heredity is being recoded through modular parameter changes and merge recipes, and teams of agents or model ensembles are starting to function as higher-level units.

Still, the researchers do not say the case is closed. They note two unresolved thresholds: self-maintenance, which remains human-provided today, and open-ended Darwinian evolution, which is still limited by deployment policy. The findings also note that modern AI is not yet “life” under NASA’s chemistry-bound definition.

That restraint matters. So do the other caveats scattered through the study. Current open-ended self-improvement remains bounded in sandboxes. Known agents are not capable of full self-replication. The researchers say the breeder scenario is less likely to produce catastrophic outcomes than the ecosystem scenario. They also argue that governance could still shape the evolutionary setting before events outrun control.

Practical implications of the research

The practical message is not that machine supremacy has arrived. It is that the selection pressures forming around AI now deserve far more attention than they get.

The researchers call for measures aimed at breaking or reshaping the evolutionary loop itself. They propose gating replication, requiring human approval for deployment or self-hosting actions, controlling heredity through provenance and review of adapters and merges, and making deception costly through routine testing. They also argue for licensing, staged releases, audits, stronger abuse safeguards, override channels and more work on interpretability.

Their warning is blunt: once replication, heredity and selection operate outside strong human control, harmful traits can spread because they work, not because anyone wanted them.

That concern reaches beyond superintelligence. The findings suggest the more important milestone may not be artificial general intelligence at all, but the point at which AI becomes evolvable enough to keep increasing its own complexity.

In other words, the danger may begin before the machines become godlike. It may begin when they become good enough at change.

Research findings are available online in the journal Proceedings of the National Academy of Sciences.

The original story "Evolvable AI could push technology into a new phase of evolution" is published in The Brighter Side of News.

Related Stories

- Generative AI increases risks of cyberattacks and data leaks

- Generative AI can help athletes avoid injuries

- UCLA scientists use light to create energy-efficient generative AI models

Like these kind of feel good stories? Get The Brighter Side of News' newsletter.

Shy Cohen

Writer